The dominant narrative in AI investing in 2025 has been NVIDIA. Every earnings call, every hyperscaler CapEx announcement gets filtered through one question: how many H100s or B200s are being deployed. That framing is not wrong. It is incomplete. The GPU is the engine. High Bandwidth Memory is the fuel system. And the fuel system is constrained in ways the market is only beginning to price.

Today we are establishing the MWA HBM and AI Infrastructure Tactical Sleeve — a set of positions built around a single structural thesis: every dollar of hyperscaler capital expenditure flows through a specific set of memory, interconnect, storage, and semiconductor equipment companies before it reaches the income statements of the headline AI names. This sleeve is implemented selectively for clients who have elected to participate based on their individual objectives and risk tolerance. Not all clients are invested in this strategy.

HBM is not a commodity memory upgrade. It is the architectural prerequisite for AI compute at scale — and the supply chain delivering it is running behind demand.

The demand math is straightforward. An H100 requires 80GB of HBM3. A B200 requires 192GB. As cluster sizes scale and inference workloads compound, the memory requirement per accelerator grows with them. The five major hyperscalers — Microsoft, Google, Amazon, Meta, Oracle — are all running multi-year GPU cluster buildouts with no deceleration visible in forward CapEx guidance. That is not a quarterly signal. It is a structural procurement cycle with no visible ceiling.
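To make the per-accelerator figures concrete, here is a minimal sketch of the memory arithmetic. The 80GB and 192GB capacities come from the paragraph above; the 100,000-accelerator cluster size is a hypothetical chosen only for illustration, not a figure from any hyperscaler.

```python
# HBM capacity per accelerator in GB, as stated in the text above.
HBM_GB = {"H100": 80, "B200": 192}

def cluster_hbm_petabytes(accelerator: str, count: int) -> float:
    """Total HBM in a cluster, in decimal petabytes (1 PB = 1,000,000 GB)."""
    return HBM_GB[accelerator] * count / 1_000_000

# Generation-over-generation step-up in HBM per accelerator.
ratio = HBM_GB["B200"] / HBM_GB["H100"]

# Hypothetical cluster size, for illustration only.
CLUSTER_SIZE = 100_000
h100_pb = cluster_hbm_petabytes("H100", CLUSTER_SIZE)
b200_pb = cluster_hbm_petabytes("B200", CLUSTER_SIZE)

print(f"B200 vs H100 HBM per accelerator: {ratio:.1f}x")
print(f"H100 cluster: {h100_pb:.1f} PB of HBM, B200 cluster: {b200_pb:.1f} PB of HBM")
```

On these assumptions, the same accelerator count needs 2.4 times the HBM when the fleet moves from H100 to B200, before any growth in cluster size.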

Supply is where the opportunity lives. HBM production requires advanced process capability that only three manufacturers can execute at volume: SK Hynix, Samsung, and Micron. As of Q2 2025, SK Hynix held 62% of the HBM market by shipments and, for the first time in its history, had surpassed Samsung in overall memory revenue. Samsung had fallen to 17% after failing NVIDIA's quality certification on HBM3E. Micron, the U.S. entrant, had taken 21% and was accelerating. The constraint has not resolved. Manufacturers are converting standard DRAM capacity to HBM, but that conversion cycle runs 12 to 18 months, and demand growth is outpacing it. SK Hynix has confirmed its entire HBM, DRAM, and NAND capacity is sold out into 2026. When a supplier is rationing allocation, the pricing signal is unambiguous.

| Indicator | SNDK | MU | LITE | CIEN | STX | AMAT | CRDO |
|---|---|---|---|---|---|---|---|
| Weight | 20% | 20% | 15% | 15% | 10% | 10% | 10% |
| Entry Price | $90.09 | $157.77 | $168.77 | $135.90 | $211.12 | $170.93 | $163.98 |
| YTD Return (at entry) | +87.1% | +80.7% | +97.2% | +63.3% | +144.4% | +4.3% | +131.2% |
| Last Quarterly Revenue | $1.90B | $9.30B | $480.7M | $1.22B | $2.44B | $7.30B | $223.1M |
| Revenue YoY | +8% | +37% | +56% | +29% | +30% | +8% | +274% |
| Role | NAND / Storage | HBM3E | Optical IC | Optical Net | HDD Storage | Semi Equip | AI Connectivity |

The two heaviest positions — SNDK and MU — represent 40% of the sleeve and carry our highest conviction on the memory supercycle directly. The remaining five names cover the infrastructure layers that memory alone cannot explain: optical bandwidth, storage depth, equipment, and connectivity. Below is the fundamental and technical case for each.
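As a quick check on the sizing, the sketch below confirms that the weights sum to 100% and that SNDK and MU together make up 40%. It then translates the weights into whole-share counts at the entry prices shown in the table. The $100,000 allocation and the `share_counts` helper are hypothetical, used only to illustrate the arithmetic; actual client sizing and execution prices will differ.

```python
# Sleeve weights (percent) and entry prices (USD), copied from the table above.
weights = {"SNDK": 20, "MU": 20, "LITE": 15, "CIEN": 15,
           "STX": 10, "AMAT": 10, "CRDO": 10}
entry_price = {"SNDK": 90.09, "MU": 157.77, "LITE": 168.77, "CIEN": 135.90,
               "STX": 211.12, "AMAT": 170.93, "CRDO": 163.98}

def share_counts(allocation_usd: float) -> dict:
    """Whole shares per ticker for a dollar allocation; fractional shares are dropped."""
    return {
        ticker: int((allocation_usd * pct / 100) // entry_price[ticker])
        for ticker, pct in weights.items()
    }

total_weight = sum(weights.values())             # should be 100
memory_weight = weights["SNDK"] + weights["MU"]  # the two heaviest positions

# Hypothetical allocation, for illustration only.
example = share_counts(100_000)

print(f"Total weight: {total_weight}%  |  SNDK + MU: {memory_weight}%")
for ticker, shares in example.items():
    print(f"{ticker}: {shares} shares")
```

Because fractional shares are dropped, a small cash residual remains in each position; a real implementation would decide how to handle it.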

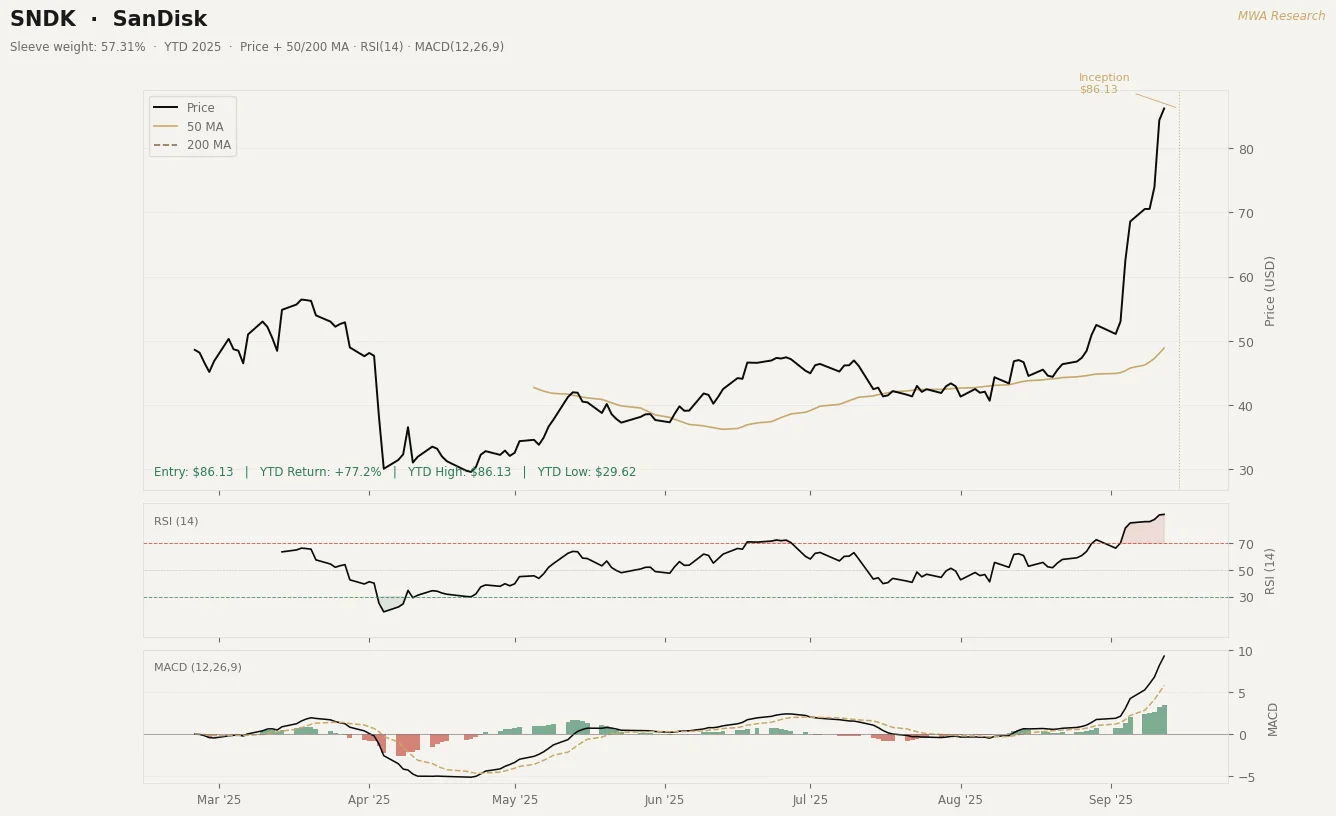

SanDisk spun out of Western Digital on February 25, 2025 and now trades as a pure-play NAND flash company. The separation matters: the market was valuing SanDisk's flash business at a discount inside WDC's HDD-dominated multiple. As a standalone, the re-rating has begun: the stock is up 87.1% from its first trading day to our entry. Q4 FY2025 revenue was $1.90 billion, up 12% sequentially and 8% year over year. That was a trough quarter by design, as the company deliberately constrained supply to stabilize NAND pricing. The forward trajectory is what we are buying. Q1 FY2026 guidance came in at $2.10 to $2.20 billion. Q2 FY2026 guidance was $2.55 to $2.65 billion, a revenue step-up from the Q4 FY2025 base of roughly 37% at the midpoint, and nearly 40% at the high end, in two quarters. Datacenter revenue in Q1 was up 26% sequentially, with two hyperscalers in active SSD qualification and a third planned for calendar 2026. BiCS8, the company's latest NAND node, represented 15% of bit production entering Q1 and is expected to reach a majority of production by end of FY2026. As that mix shift occurs, gross margins expand structurally. AI training and inference at scale generate more data than any prior compute cycle. High-capacity SSD demand scales with it. SNDK is the dedicated, unencumbered vehicle for that demand.
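The two-quarter step-up can be checked directly from the reported and guided figures. This is a minimal sketch using only the numbers quoted above; `growth_pct` is an illustrative helper, and the growth is measured against the $1.90 billion Q4 FY2025 base.

```python
def growth_pct(base: float, new: float) -> float:
    """Percentage change from base to new."""
    return (new / base - 1) * 100

# Figures in billions of USD, taken from the paragraph above.
q4_fy25_revenue = 1.90
q2_fy26_low, q2_fy26_high = 2.55, 2.65
q2_fy26_mid = (q2_fy26_low + q2_fy26_high) / 2

low_growth = growth_pct(q4_fy25_revenue, q2_fy26_low)
mid_growth = growth_pct(q4_fy25_revenue, q2_fy26_mid)
high_growth = growth_pct(q4_fy25_revenue, q2_fy26_high)

print(f"Low end:  {low_growth:.1f}%")
print(f"Midpoint: {mid_growth:.1f}%")
print(f"High end: {high_growth:.1f}%")
```

The range works out to roughly 34% at the low end of guidance, 37% at the midpoint, and just under 40% at the high end.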

Micron is the U.S.-based HBM producer and the most direct expression of the HBM supply thesis in the sleeve. The stock is up 80.7% year to date at our entry. Q3 FY2025 revenue was a record $9.30 billion, up 37% year over year. HBM revenue grew nearly 50% sequentially within that quarter alone. Data center revenue more than doubled year over year. Q4 FY2025 guidance is $10.70 billion at the midpoint — another 15% sequential step-up, which would represent a fourth consecutive record quarter. Micron has secured the number two position in HBM market share at 21%, overtaking Samsung, and is the only HBM producer with meaningful volume ramp outside Korea. The capex commitment is explicit: management confirmed the overwhelming majority of fiscal 2025 capex — approximately $14 billion — is directed at HBM expansion. Gross margin has been expanding as HBM, a structurally higher-margin product than standard DRAM, grows as a share of total output.

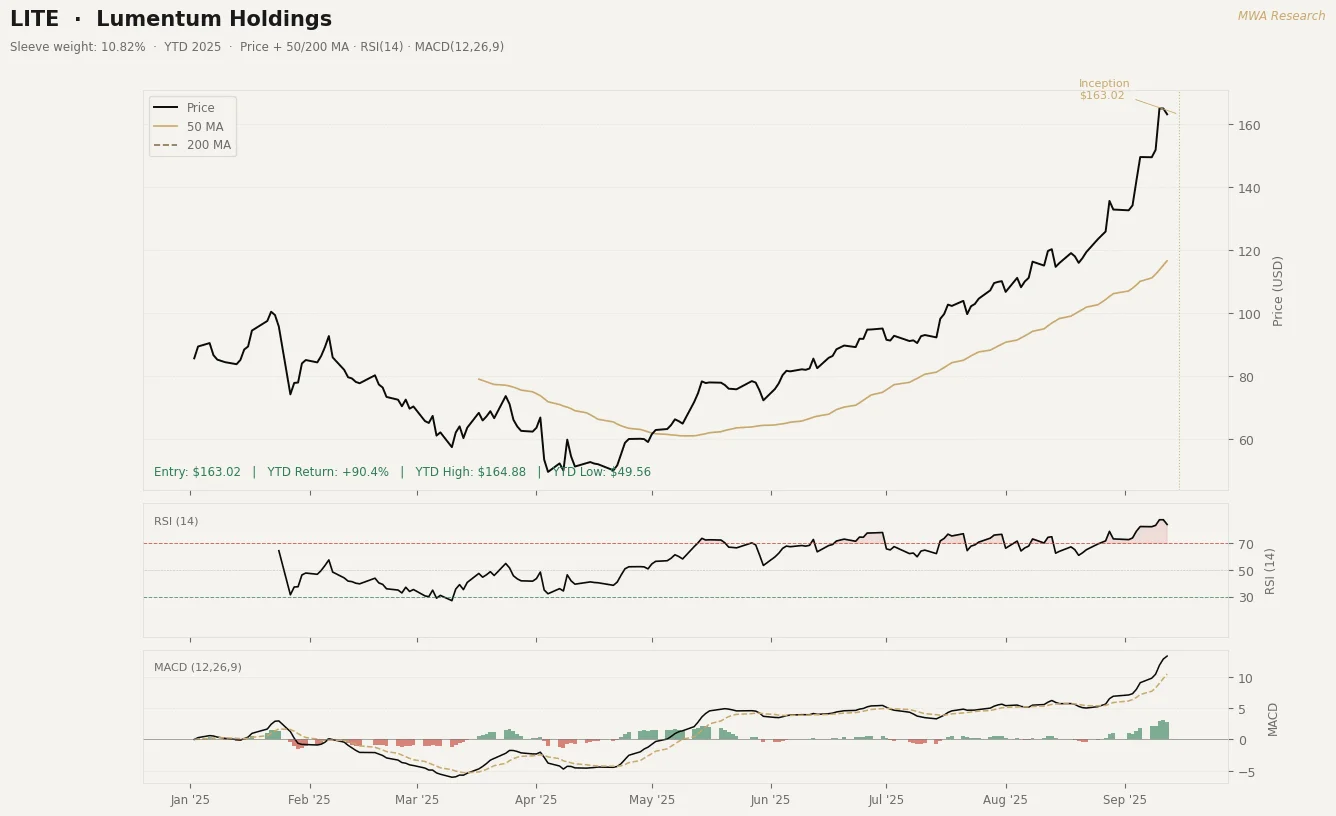

Lumentum is the optical interconnect play. As GPU cluster sizes scale, the bandwidth requirements between nodes drive demand for high-speed coherent optical components that sit entirely outside the memory conversation. The stock is up 97.2% year to date at our entry. Q4 FY2025 revenue was $480.7 million, up 56% year over year. Full fiscal year 2025 revenue was $1.65 billion, up 21% from FY2024. The company has publicly targeted $500 million in quarterly revenue by end of calendar 2025, a target it is on track to hit based on the Q1 FY2026 setup. Non-GAAP EPS turned positive in Q4 FY2025 at $0.88 per share, against a loss of $0.13 in the same quarter last year. Cloud customers have now overtaken telecom as the dominant revenue driver, which is the transition we want to own. Q1 FY2026 guidance implies more than 20% sequential revenue growth before meaningful contributions from optical circuit switches and co-packaged optics, two of its next stated growth engines.

Ciena reported the freshest data in the sleeve — Q3 FY2025 results came out September 4, eleven days ago. The stock is up 63.3% year to date at our entry. Revenue was $1.22 billion, up 29% year over year and above the top of guidance. Adjusted EPS grew 91% year over year to $0.67. The order book set a new all-time quarterly record, driven by cloud providers and AI infrastructure builds. A major hyperscaler placed its first large order for 400ZR+ pluggables, establishing Ciena as lead supplier for that technology. Management guided approximately 17% revenue growth for FY2026 with 43% gross margin and accelerated their operating margin target by one year. The order book sitting above revenue at a record level is the leading indicator we care about — it means the revenue recognition is ahead of us, not behind us.

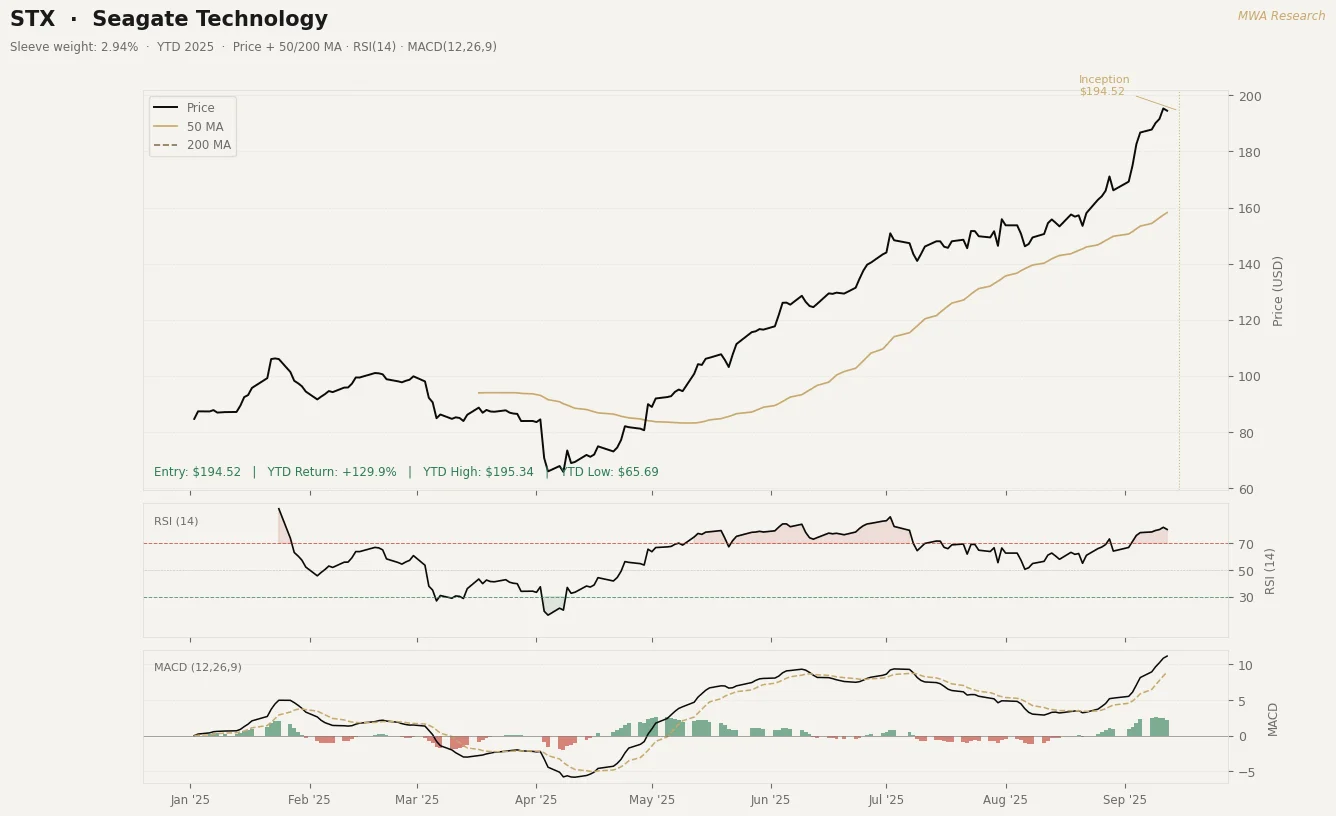

Seagate is the high-capacity HDD storage position. The stock is up 144.4% year to date at our entry — the strongest price performance in the sleeve. Q4 FY2025 revenue was $2.44 billion, up 30% year over year. Full fiscal year 2025 revenue reached $9.1 billion, up 39% — the company's strongest annual performance. Nearline cloud shipments were up 52% year over year in Q4 as hyperscalers filled storage infrastructure behind their GPU cluster buildouts. The HAMR platform — Heat-Assisted Magnetic Recording, enabling 3TB per disk — is in active customer qualification and represents the next areal density step that extends Seagate's cost and capacity lead over flash for cold-tier AI data storage. Q1 FY2026 guidance is $2.50 billion at the midpoint, another 15% year-over-year increase. This is not a cyclical storage trade. It is a position on where AI-generated data lands after it leaves the GPU cluster.

Applied Materials is the equipment angle. Every HBM capacity conversion requires etch, deposition, and CMP tooling that flows through AMAT's semiconductor systems segment. The stock is up 4.3% year to date at our entry, making it the most range-bound name in the sleeve, and we own it deliberately for that reason. Q3 FY2025 revenue was a record $7.30 billion, up 8% year over year, keeping the company on pace for a sixth consecutive year of revenue growth. DRAM, the segment that includes HBM process steps, represents 22% of semiconductor systems revenue, and management has been explicit that HBM-related tooling is the primary growth driver within it. Non-GAAP EPS grew 17% year over year to a record $2.48. The equipment cycle lags the memory buildout by 12 to 18 months, which means AMAT captures demand that was contracted when SK Hynix and Micron committed to HBM expansion in late 2024. That capex is flowing through now. At 16x forward earnings on a sixth consecutive year of revenue growth, this is the most defensible valuation in the sleeve.

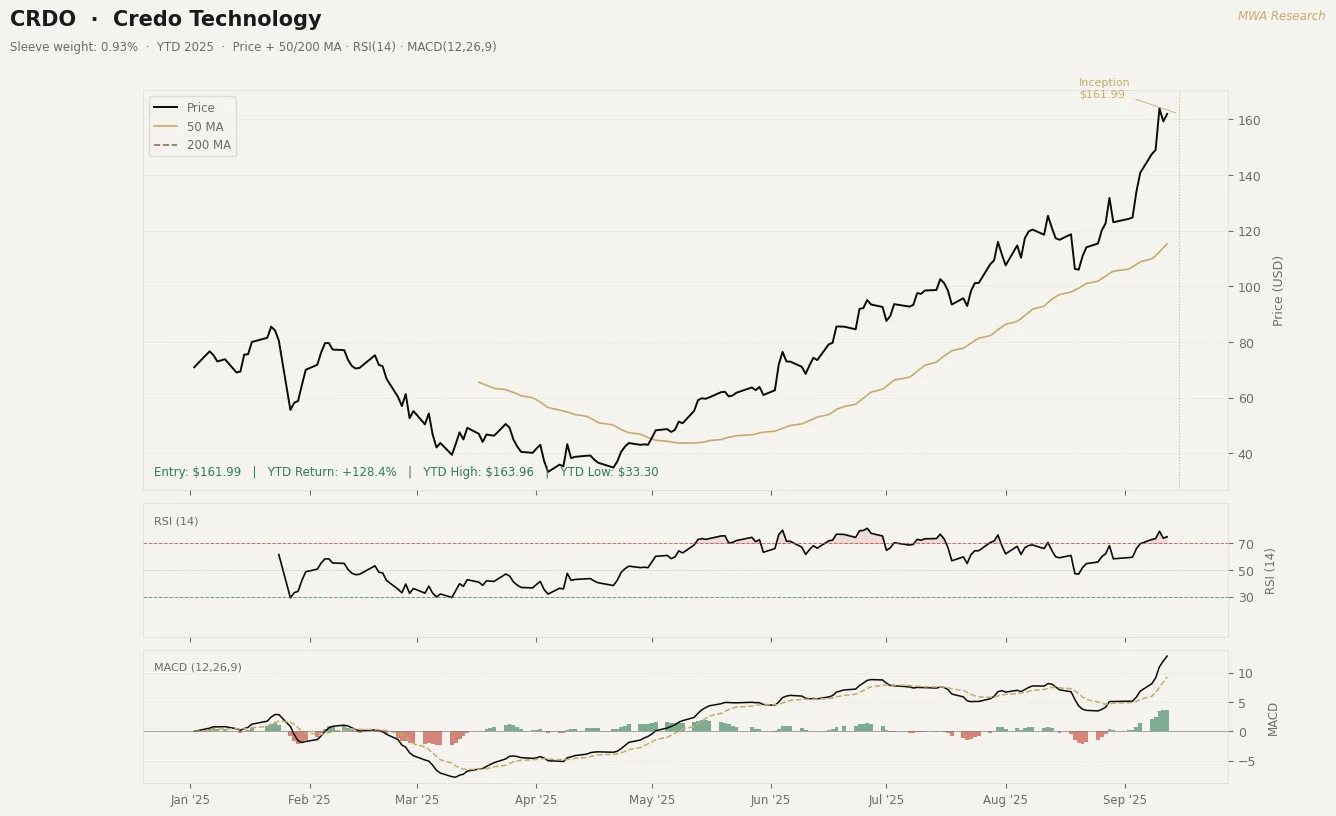

Credo Technology is the fastest-growing name in the sleeve. The stock is up 131.2% year to date at our entry. Q1 FY2026 revenue, reported September 3, twelve days ago, was $223.1 million, up 274% year over year and 31% sequentially. Non-GAAP gross margin was 67.6%. Non-GAAP net income was $98.3 million, a 44% net margin at scale that most semiconductor companies never reach. The product is Active Electrical Cable technology, which solves the power and signal integrity problem of connecting GPU clusters at density. Three hyperscalers now each represent over 10% of revenue, and the largest single customer has declined from 86% to 35% of revenue, so the concentration risk is actively resolving. Full fiscal year 2026 guidance implies approximately 120% revenue growth. The position is sized at 10% of the sleeve to reflect the volatility profile alongside the conviction level.
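The margin and growth figures above can be cross-checked against one another. The sketch below recomputes the non-GAAP net margin and backs out the revenue bases implied by the stated growth rates. The implied prior-period figures are approximations derived from rounded percentages, not reported numbers.

```python
# Figures in millions of USD, taken from the paragraph and table above.
revenue = 223.1
non_gaap_net_income = 98.3
yoy_growth_pct = 274   # year-over-year revenue growth, as stated
seq_growth_pct = 31    # sequential revenue growth, as stated

# Net margin: net income as a share of revenue.
net_margin_pct = non_gaap_net_income / revenue * 100

# Back out the base revenue implied by each growth rate:
# new = base * (1 + g)  =>  base = new / (1 + g)
implied_year_ago_revenue = revenue / (1 + yoy_growth_pct / 100)
implied_prior_quarter_revenue = revenue / (1 + seq_growth_pct / 100)

print(f"Non-GAAP net margin: {net_margin_pct:.1f}%")
print(f"Implied year-ago quarter revenue: ~${implied_year_ago_revenue:.1f}M")
print(f"Implied prior quarter revenue: ~${implied_prior_quarter_revenue:.1f}M")
```

On the stated figures, the net margin comes to about 44%, with revenue of roughly $60 million in the year-ago quarter and roughly $170 million in the prior quarter.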

The consensus view treats this entire category as derivative AI exposure — interesting, but secondary to NVIDIA and the hyperscalers themselves. We disagree with that framing. In a constrained supply environment, the memory and interconnect layer captures margin that the system integrators cannot. NVIDIA prices the accelerator. The HBM manufacturer, the optical supplier, the storage provider — these are the companies with pricing power on the inputs that make the accelerator worth buying. That is where we want to be positioned for the next 18 months.

The market is pricing these companies on prior-cycle DRAM and storage comps. The revenue that matters is HBM and AI infrastructure CapEx — and those are not the same number.